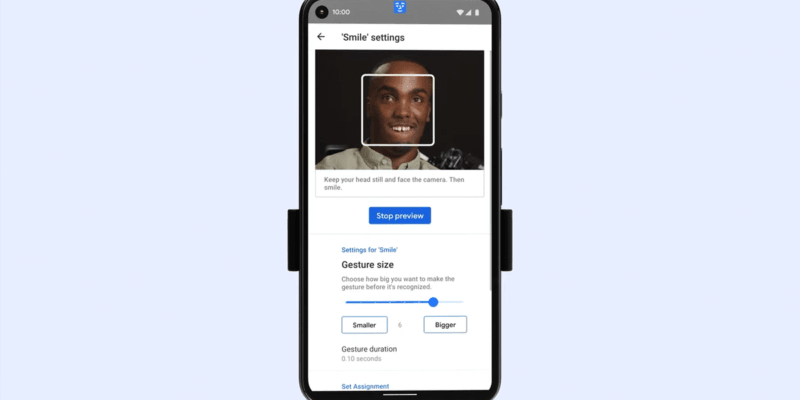

Google has confirmed that people with speech or physical disabilities can now control Android phones using gestures. This is thanks to two new tools that put machine learning and smartphones' front-facing cameras to work detecting face and eye movements. Users can scan their phone screen and select a task simply by smiling, raising their eyebrows, opening their mouth, or looking to the left, right or up.

“To make Android more accessible for everyone, we’re launching new tools that make it easier to control your phone and communicate using facial gestures,” Google said.

This has been part of Google’s plans for some time, following recommendations from the Centers for Disease Control and Prevention. The initiative has pushed not just Google but also Apple and Microsoft to make their products and services more accessible to people with disabilities.

“Every day, people use voice commands, like ‘Hey Google’, or their hands to navigate their phones,” the tech giant said in a blog post.

“However, that’s not always possible for people with severe motor and speech disabilities.”

The changes come from two new features, one of which is Camera Switches. This lets people use facial gestures instead of swipes and taps to interact with their smartphones.

The other is Project Activate, a new app that lets people use those gestures to trigger an action, such as having the phone play a recorded phrase, send a text, or make a call.

“Now it’s possible for anyone to use eye movements and facial gestures that are customised to their range of movement to navigate their phone — sans hands and voice,” Google said.
